Efficient docker containers with multi-stage builds

Build efficient Docker containers by leveraging multi-stage builds, including only what you need to create high-performing, secure and lightweight images for your applications.

Following on from my previous post in the series on running containers in the cloud, let's talk about building the container images. During development, it's tempting to follow the first documentation you find, or take the path of least resistance, just to get going. In my case, building my Rails app, I started off with FROM ruby:latest. From there you install some dependencies, copy your application code across, then write an ENTRYPOINT or CMD to boot your image.

This is all well and good; however, you end up with a lot of cruft you don't actually need once your app is built, which leads to oversized, bloated images. This has some annoying side effects:

- The image is larger than it needs to be, which increases storage costs in your remote Docker registry.
- Build-time dependencies are left behind. For example, if you need to install some particular gems, you need native libraries and binaries already installed before those gems will install. Or, in the case of the asset pipeline, compiling JavaScript means you need Node installed, plus all the node_modules, just to compile and minify your JavaScript.
- Keeping that extra cruft in your image, on top of a full distribution like Debian (which is what ruby:latest is built on), leaves … things … for lack of a better term, sitting around that can create problems and be exploited via known CVEs.
- Lastly, all these things consume disk space, and some consume memory and CPU cycles. That means slower boot times for your containers and more resources needed to run them in the cloud. Since most clouds bill on a pay-for-what-you-consume model, this costs your wallet more.

What can we do?

Multi-stage Docker builds have entered the chat room

Multi-stage docker builds

At its core, a multi-stage Docker build lets you split the build into several stages and still end up with a single image, carrying across only what you need from one stage to the next. It looks like two Dockerfiles smashed together into one.

Here’s mine which I wrote for my rails app:

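```dockerfile
# Stage 1, "builder": has the full toolchain needed to compile gems and assets
FROM ruby:2.6.6-alpine AS builder

RUN apk update && apk upgrade
RUN apk add --update alpine-sdk nodejs yarn sqlite-dev tzdata && rm -rf /var/cache/apk/*

ENV APP_HOME /app
RUN mkdir $APP_HOME
WORKDIR $APP_HOME

# Copy only the Gemfiles first so the gem layer stays cached until they change
COPY Gemfile* $APP_HOME/

ENV RAILS_ENV=production
# Placeholder value: assets:precompile needs a SECRET_KEY_BASE to boot Rails in production
ENV SECRET_KEY_BASE mykey

RUN bundle install --deployment --jobs=4 --without development test

COPY package.json yarn.lock $APP_HOME/
RUN yarn install --check-files

COPY . $APP_HOME
RUN bundle exec rake assets:precompile

# Drop build-only leftovers so they are not copied into the final stage
RUN rm -rf $APP_HOME/node_modules
RUN rm -rf $APP_HOME/tmp/*

# Stage 2: the runtime image, with only runtime system packages
FROM ruby:2.6.6-alpine

RUN apk update && apk add --update sqlite-dev tzdata && rm -rf /var/cache/apk/*

ENV APP_HOME /app
RUN mkdir $APP_HOME
WORKDIR $APP_HOME

# Bring across the app, installed gems and compiled assets from the builder stage
COPY --from=builder /app $APP_HOME

ENV RAILS_ENV=production
# Placeholder: a real SECRET_KEY_BASE is better injected at runtime than baked into the image
ENV SECRET_KEY_BASE mykey

RUN bundle config --local path vendor/bundle
RUN bundle config --local without development:test:assets

# EXPOSE only documents the container port; host port mapping happens at run time
EXPOSE 3001

CMD rm -f tmp/pids/server.pid \
  && bundle exec rails db:migrate \
  && bundle exec rails s -b 0.0.0.0 -p 3001
```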

To start, you name any stage you want to copy things out of, so that later stages can reference it; the last stage doesn't need a name. In my case, I'm only using two stages. I name the first one “builder”, and the first thing I do in it is install the system dependencies I'm going to need to build everything: the alpine-sdk build tools, nodejs, yarn, sqlite-dev, tzdata and so on.
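Stripped of the Rails specifics, the naming mechanics look something like the sketch below. The artifact.txt file and the echo step are placeholders standing in for real build output. A stage can also be referenced by its zero-based index (--from=0 here), but a name keeps working if you later reorder or add stages.

```dockerfile
# First stage, named "builder": stands in for the full build toolchain
FROM ruby:2.6.6-alpine AS builder
WORKDIR /app
# Placeholder for real build output (compiled gems, assets, etc.)
RUN echo "built artifact" > artifact.txt

# Final stage: left unnamed; by default the last stage is what the build produces
FROM ruby:2.6.6-alpine
WORKDIR /app
# Copy a single file out of the named stage...
COPY --from=builder /app/artifact.txt ./
# ...which is equivalent to referencing the stage by its index:
# COPY --from=0 /app/artifact.txt ./
CMD ["cat", "artifact.txt"]
```

You can also build only up to a named stage with docker build --target builder ., which is handy for poking at the builder on its own.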

Then I copy over the bare minimum of my application code, in this case just two files, Gemfile and Gemfile.lock, and use them to install my gems. Copying these before the rest of the code means Docker can reuse the cached gem layer until the Gemfiles change. You'll note that I use the --deployment flag, as I want the gems installed into the application directory; Bundler puts them in vendor/bundle. Next I run yarn install with the --check-files flag, copy in the rest of the app, and precompile my assets into their minified forms.
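Depending on which Bundler version your base image ships with, those flags may print deprecation warnings: Bundler 2.1 and later deprecate --deployment and --without in favour of settings stored with bundle config. Assuming Bundler 2.1+, an equivalent builder step might look like this sketch, with path set explicitly so the gems still land in vendor/bundle:

```dockerfile
# Assumes Bundler 2.1+; stands in for
#   RUN bundle install --deployment --jobs=4 --without development test
RUN bundle config set --local deployment 'true' \
 && bundle config set --local path 'vendor/bundle' \
 && bundle config set --local without 'development test' \
 && bundle install --jobs=4
```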

Once that's done, and node_modules and the contents of tmp have been deleted since they aren't needed at runtime, the builder stage is complete and we're onto the next stage. The only part that differs from what you may be used to in a normal Docker build is the COPY command, where I pass --from=builder to say which earlier stage to copy from. Here we're copying over the pre-built gems and the minified CSS and JavaScript files we made in the previous stage.
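Because COPY --from=builder /app copies the whole directory, whatever is left in /app at the end of the builder stage ends up in the final image. One further trim, which is not in my own file, so treat it as a hypothetical sketch: delete the downloaded .gem archives and the C sources and object files left over from compiling native extensions. It assumes gems live under vendor/bundle, as --deployment arranges, and it's worth comparing image sizes before and after.

```dockerfile
# Hypothetical extra step for the end of the builder stage (WORKDIR is /app).
# Removes cached .gem archives plus C sources/objects from native extension builds;
# the compiled .so files the gems actually load are left in place.
RUN rm -rf vendor/bundle/ruby/*/cache/*.gem \
 && find vendor/bundle/ruby/*/gems/ -name "*.c" -delete \
 && find vendor/bundle/ruby/*/gems/ -name "*.o" -delete
```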

Then we run some Ruby-specific configuration: bundle config tells Bundler to load gems from vendor/bundle rather than the default location, and to skip the development, test and assets groups.
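The same settings can also be supplied as BUNDLE_* environment variables, which Bundler reads alongside its config files. Here's a sketch of that alternative for the final stage, assuming APP_HOME is /app as above. One caveat: Bundler gives an application's local config precedence over environment variables, so this only behaves the same if no conflicting local config ends up in the image.

```dockerfile
# Alternative to the two `bundle config --local` lines in the final stage
ENV BUNDLE_PATH=/app/vendor/bundle \
    BUNDLE_WITHOUT=development:test:assets
```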

Outcome

So this journey had a number of neato side effects: the problems I outlined earlier went away in ways I never really thought about until I'd been through it. For example, the compute requirements. I had it in my head that Rails was quite a heavy framework, so naturally it would need more compute than lighter stacks like Go or Node. I'm now guessing that applies more to mature applications with many more dependencies, as I was remarkably surprised by the results.

Security

Firstly, for the eagle-eyed readers among you, I'll point out that I switched to using Alpine. Alpine Linux is an OS with an absolutely tiny footprint. So small, in fact, that the whole system could fit on three floppy disks, as it's only 3.98MB in size!
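Alpine's package manager, apk, can also help keep layers small. Its --no-cache flag fetches the package index for that one command without leaving it in the image, which replaces the apk update plus rm -rf /var/cache/apk/* pattern. A sketch for the final stage follows; swapping sqlite-dev (headers for compiling) for sqlite-libs (the runtime library) is my assumption, so check the app still boots with it before relying on it.

```dockerfile
# Sketch of the final stage's package step: no index cache is written,
# so no separate clean-up is needed. sqlite-libs instead of sqlite-dev is an assumption.
RUN apk add --no-cache sqlite-libs tzdata
```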

Additionally, according to their website:

> Alpine Linux is a security-oriented, lightweight Linux distribution based on musl libc and busybox.

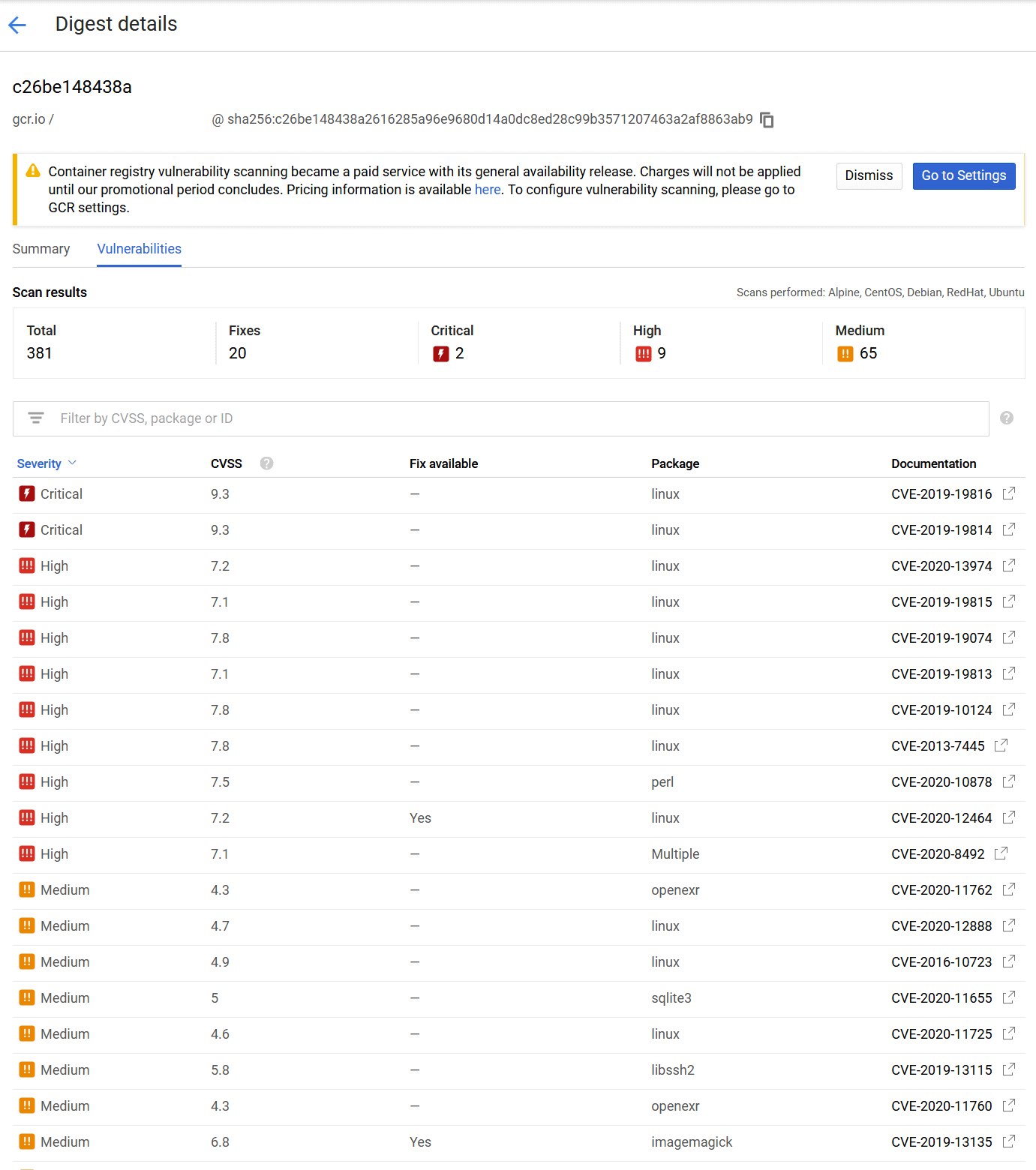

Check out this CVE report from a vulnerability scan on GCP.

The above image shows all the vulnerabilities my Ruby container was exhibiting before the change. In total, 381 vulnerabilities were found, 20 of which had fixes available. When I switched to Alpine Linux, this list went down to zero.

Efficiency

With the move to Alpine Linux, I was able to shave around 600MB off the image size, and the multi-stage build shaved off a further 300MB. My basic Rails app (it's still a prototype in its early stages) now comes in at 92MB. That is tiny! Considering I was at close to a gigabyte before the change, it's a massive improvement. Notably, I'm also able to use a smaller compute instance for my application. In fact, I'm able to use the smallest compute size available on Cloud Run, which is where the app is currently hosted.

It's quite happily chugging along using less than 50% of the resources provisioned: a shared vCPU and 256MB of RAM.
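Since the app runs on Cloud Run, one detail worth knowing: Cloud Run tells the container which port to listen on through the PORT environment variable (8080 unless the service is configured otherwise). My image hard-codes 3001, which works as long as the service's container port is set to match. A sketch of a CMD that honours PORT, falling back to 3001 when it's unset:

```dockerfile
# Shell-form CMD, so /bin/sh expands ${PORT:-3001} at container start:
# uses the platform-provided PORT if set, otherwise falls back to 3001.
CMD rm -f tmp/pids/server.pid \
  && bundle exec rails db:migrate \
  && bundle exec rails s -b 0.0.0.0 -p ${PORT:-3001}
```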

Performance

Now, I'm not sure how much of this is down to Cloud Run, but my container cold start times are incredible. Rails boots super fast and is running within seconds, on minimal compute power. Because of the multi-stage build and the small resource footprint the container needs, it's able to absolutely fly when a request comes in.

What a win.

Summary

This has been a great exercise. I’ve learned a lot about security and performance, and how to make the best out of your application container images.

Got questions!? I'd love to help! Hit me up on Twitter. If your question is more general, I'll update the article! Hopefully this has helped you with your multi-stage builds, or at least shown some of the performance you can get from investing a little time and thought into your application's containers.